While the vehicle is moving, it is surrounded by many other objects, which may or may not affect its route. Static objects can serve as localization waypoints or act as obstacles, while moving objects can pose a collision risk. To determine correctly what type of object is present in the vehicle’s environment, each object must be properly identified.

Examples of static objects include buildings, road signs, curbs, roads or intersections, while dynamic objects include other cars, people or animals.

Objects can be identified based on their:

- location

- 3D model (from lidar)

- motion trajectory

- 2D image (e.g., from a camera)

Classification of objects

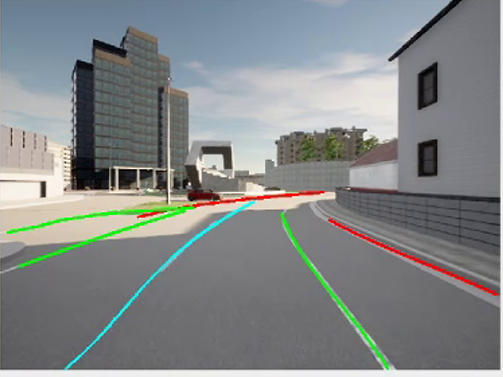

Recognition of road edges and road markings

For example, if an object’s position on the map and its current position relative to the vehicle are known, the system can check whether a static object such as a building is present at a given location. Similarly, if models of cars are available, they can be used to identify nearby vehicles.
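The map-based check described above can be sketched as follows. This is a minimal illustration, not a production method: the `STATIC_MAP` contents, the function names, the 2D pose representation and the 2 m tolerance are all assumptions made for the example.

```python
import math

# Hypothetical map of known static objects: (label, x, y) in map coordinates, metres.
STATIC_MAP = [
    ("building", 120.0, 45.0),
    ("road_sign", 98.5, 12.0),
]

def to_map_frame(vehicle_x, vehicle_y, vehicle_yaw, rel_x, rel_y):
    """Transform a detection from the vehicle frame into the map frame (2D rotation + translation)."""
    map_x = vehicle_x + rel_x * math.cos(vehicle_yaw) - rel_y * math.sin(vehicle_yaw)
    map_y = vehicle_y + rel_x * math.sin(vehicle_yaw) + rel_y * math.cos(vehicle_yaw)
    return map_x, map_y

def match_static_object(map_x, map_y, static_map=STATIC_MAP, tolerance_m=2.0):
    """Return the label of the nearest mapped static object within the tolerance, else None."""
    best_label, best_dist = None, tolerance_m
    for label, sx, sy in static_map:
        dist = math.hypot(sx - map_x, sy - map_y)
        if dist <= best_dist:
            best_label, best_dist = label, dist
    return best_label

# Vehicle at (100, 40) heading along the map x-axis sees something 20 m ahead, 5 m to the left.
x, y = to_map_frame(100.0, 40.0, 0.0, 20.0, 5.0)
print(match_static_object(x, y))
```

A detection that matches no mapped object within the tolerance returns `None` and would have to be identified by other means, such as its shape or motion.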

Object identification is necessary for the vehicle to avoid collisions, and since the vehicle’s environment is full of objects, it must be determined which of them may be on a collision course. Tracking every object in the environment can be too computationally intensive, which is why tracking is usually limited to objects that could collide with the vehicle. In a dense environment (e.g., on a traffic circle in Paris or a road in Barcelona), however, the vehicle must track many objects at once, even at the expense of limiting its maximum speed so that it can respond to a threat sooner. On the other hand, treating all objects as static may result in a collision with a walking pedestrian or a moving vehicle.
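One simple way to narrow tracking down to potentially colliding objects is to estimate each object's closest point of approach. The sketch below assumes constant relative velocity; the `TrackedObject` fields, the 5 s horizon and the 2 m safety radius are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class TrackedObject:
    name: str
    rel_x: float   # position relative to the vehicle, metres
    rel_y: float
    rel_vx: float  # velocity relative to the vehicle, m/s
    rel_vy: float

def closest_approach(obj):
    """Return (time, distance) of closest approach, assuming constant relative velocity."""
    speed_sq = obj.rel_vx ** 2 + obj.rel_vy ** 2
    if speed_sq == 0.0:
        t = 0.0  # no relative motion: the current distance is the closest one
    else:
        # Time that minimises the distance; clamped so past approaches are ignored.
        t = max(0.0, -(obj.rel_x * obj.rel_vx + obj.rel_y * obj.rel_vy) / speed_sq)
    dx = obj.rel_x + obj.rel_vx * t
    dy = obj.rel_y + obj.rel_vy * t
    return t, (dx * dx + dy * dy) ** 0.5

def select_for_tracking(objects, horizon_s=5.0, safety_radius_m=2.0):
    """Keep only objects that come within the safety radius inside the time horizon."""
    selected = []
    for obj in objects:
        t, dist = closest_approach(obj)
        if t <= horizon_s and dist <= safety_radius_m:
            selected.append(obj)
    return selected

objects = [
    TrackedObject("oncoming_car", 20.0, 0.0, -10.0, 0.0),  # closing head-on
    TrackedObject("receding_car", 20.0, 0.0, 5.0, 0.0),    # moving away
    TrackedObject("distant_car", 100.0, 0.0, -10.0, 0.0),  # closing, but beyond the horizon
]
print([o.name for o in select_for_tracking(objects)])
```

In a dense environment, widening the horizon or the safety radius makes more objects qualify for tracking, which is the trade-off against computational load described above.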

Object detection requires the use of appropriate algorithms, which can be divided into two groups:

- classical

- deep learning.

Despite being less resource-intensive, classical algorithms are rarely used because they take longer to implement and often identify objects less effectively. That is why the algorithms currently in use are mostly based on deep learning. Classical algorithms are mainly used for fusing data from different sensors.

The identification effectiveness of individual sensors can be improved by fusing their outputs into a single coherent object. For example, if both the lidar’s and the camera’s neural networks identify an object as a car, then after fusion it can be classified as a car with high probability. If, however, the lidar classifies the object as a truck while the camera classifies it as a building, one or even both sensors may have identified it incorrectly. In this case, the fusion algorithm must make a choice or classify the object as “unidentified”.
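The decision logic of such a fusion step can be sketched as below. This is a deliberately simple rule-based illustration and not a specific published fusion algorithm: the function name, the thresholds and the way agreeing confidences are combined (treating the two sensors' errors as independent) are assumptions.

```python
def fuse_labels(lidar, camera, min_confidence=0.6, margin=0.2):
    """Fuse two (label, confidence) classifications into one.

    Returns (label, confidence), or ("unidentified", 0.0) when the sensors
    disagree and neither clearly dominates.
    """
    lidar_label, lidar_conf = lidar
    camera_label, camera_conf = camera

    if lidar_label == camera_label:
        # Agreement: combine confidences, assuming the sensors err independently.
        fused_conf = 1.0 - (1.0 - lidar_conf) * (1.0 - camera_conf)
        return lidar_label, fused_conf

    # Disagreement: accept the more confident sensor only if it clearly dominates.
    best_label, best_conf = max((lidar, camera), key=lambda pair: pair[1])
    other_conf = min(lidar_conf, camera_conf)
    if best_conf >= min_confidence and best_conf - other_conf >= margin:
        return best_label, best_conf
    return "unidentified", 0.0

print(fuse_labels(("car", 0.8), ("car", 0.7)))            # sensors agree
print(fuse_labels(("truck", 0.55), ("building", 0.5)))    # ambiguous disagreement
```

Returning an explicit "unidentified" label, rather than forcing a choice, lets downstream modules treat the object conservatively.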

Deep learning algorithms trained using representative datasets can classify an object correctly with high accuracy, and then pass it on for analysis. Identified moving objects (a human, a truck or a car) are often passed on to object tracking modules, while static objects (e.g., road signs) are identified and interpreted by the autonomous system to determine (or modify) the vehicle’s movement parameters.
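The hand-off described above can be sketched as a small dispatcher. The label sets and the queue names (`tracking`, `interpretation`, `unhandled`) are hypothetical and only mirror the examples given in the text.

```python
# Hypothetical label sets, taken from the examples in the text.
DYNAMIC_LABELS = {"human", "truck", "car"}
STATIC_LABELS = {"road_sign"}

def route_detections(detections):
    """Split (label, payload) detections between tracking and static interpretation."""
    routed = {"tracking": [], "interpretation": [], "unhandled": []}
    for label, payload in detections:
        if label in DYNAMIC_LABELS:
            routed["tracking"].append((label, payload))
        elif label in STATIC_LABELS:
            routed["interpretation"].append((label, payload))
        else:
            # Labels outside both sets are kept aside rather than silently dropped.
            routed["unhandled"].append((label, payload))
    return routed

detections = [("car", {"id": 1}), ("road_sign", {"id": 2}), ("human", {"id": 3})]
routed = route_detections(detections)
print(len(routed["tracking"]), len(routed["interpretation"]))
```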